Class: kafka. Integrates with Apache Kafka. Sources consume topics and publish into a PADAS stream; sinks subscribe to a stream and produce records to a topic.

Create and edit connectors under Sources or Sinks. Advanced Settings may expose more runtime tuning, depending on deployment and permissions.

Source and sink behavior

| Role | Behavior |
| --- | --- |
| Source | Kafka consumer: reads topic from bootstrap_servers with group_id and publishes into stream. |
| Sink | Kafka producer: reads from the subscribed stream and publishes to topic as set in config. |
| Streams | Processing upstream of the sink must publish to the stream id the sink consumes; the source writes the stream that tasks attach to (Streams). |

Required fields

Every connector row

| Field | Required | Notes |
| --- | --- | --- |
| name | Yes | Display name; id derived from it. |
| class | Yes | Must be kafka. |
| stream | Yes | Resolved stream id after Auto Create Stream or manual pick. |
| type | Yes | source or sink, from the screen used at create time. |
| config | Yes | Class-specific object; see below. |
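As a rough illustration of the row shape in the table above, a connector can be modeled as a plain mapping with the five fields. This is only a sketch, not a PADAS API: the function names and all sample values (connector name, stream id, broker address, topic) are hypothetical placeholders.

```python
# Hypothetical sketch: checks that a connector row carries the five
# fields listed in the table above. Not part of any PADAS API.
REQUIRED_ROW_FIELDS = ("name", "class", "stream", "type", "config")
VALID_TYPES = ("source", "sink")


def missing_connector_fields(row: dict) -> list:
    """Return the required row fields that are absent or empty."""
    return [field for field in REQUIRED_ROW_FIELDS if not row.get(field)]


def check_connector_row(row: dict) -> list:
    """Return a list of problems; an empty list means the row looks complete."""
    problems = [f"missing field: {f}" for f in missing_connector_fields(row)]
    if row.get("class") and row["class"] != "kafka":
        problems.append("class must be kafka")
    if row.get("type") and row["type"] not in VALID_TYPES:
        problems.append("type must be source or sink")
    return problems


# Sample values are illustrative placeholders.
example_row = {
    "name": "orders-in",
    "class": "kafka",
    "stream": "orders-stream",
    "type": "source",
    "config": {
        "bootstrap_servers": "broker1:9092",
        "topic": "orders",
        "group_id": "padas-orders-in",
    },
}

print(check_connector_row(example_row))  # prints []
```

A check like this could run before submitting a connector definition, so that a missing field is caught locally rather than at save time.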

Class kafka — required configuration

| Setting | Required | Notes |
| --- | --- | --- |
| bootstrap.servers / bootstrap_servers | Yes | Broker list (UI often shows dots; stored key is commonly snake_case). |
| topic | Yes | Topic to consume (source) or produce (sink). |
| group.id / group_id | Source only | Consumer group; omit for sinks. |
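The dotted and snake_case spellings, together with the source-only rule for the consumer group, can be sketched as a small check. The helper names below are hypothetical, and whether PADAS itself normalizes keys this way is an assumption; the sample broker, topic, and group values are placeholders.

```python
# Hypothetical sketch: normalizes dotted Kafka keys to snake_case and
# checks the required settings from the table above.
def normalize_keys(config: dict) -> dict:
    """Map keys such as 'bootstrap.servers' to 'bootstrap_servers'."""
    return {key.replace(".", "_"): value for key, value in config.items()}


def check_kafka_config(config: dict, connector_type: str) -> list:
    """Return a list of problems; an empty list means required settings are present."""
    cfg = normalize_keys(config)
    problems = []
    for key in ("bootstrap_servers", "topic"):
        if not cfg.get(key):
            problems.append(f"{key} is required")
    if connector_type == "source" and not cfg.get("group_id"):
        problems.append("group_id is required for sources")
    if connector_type == "sink" and "group_id" in cfg:
        problems.append("group_id should be omitted for sinks")
    return problems


# Placeholder values for illustration only.
source_cfg = {
    "bootstrap.servers": "broker1:9092",
    "topic": "orders",
    "group.id": "padas-orders",
}
sink_cfg = {"bootstrap_servers": "broker1:9092", "topic": "alerts"}

print(check_kafka_config(source_cfg, "source"))  # prints []
print(check_kafka_config(sink_cfg, "sink"))      # prints []
print(check_kafka_config({"topic": "orders"}, "source"))
# prints ['bootstrap_servers is required', 'group_id is required for sources']
```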

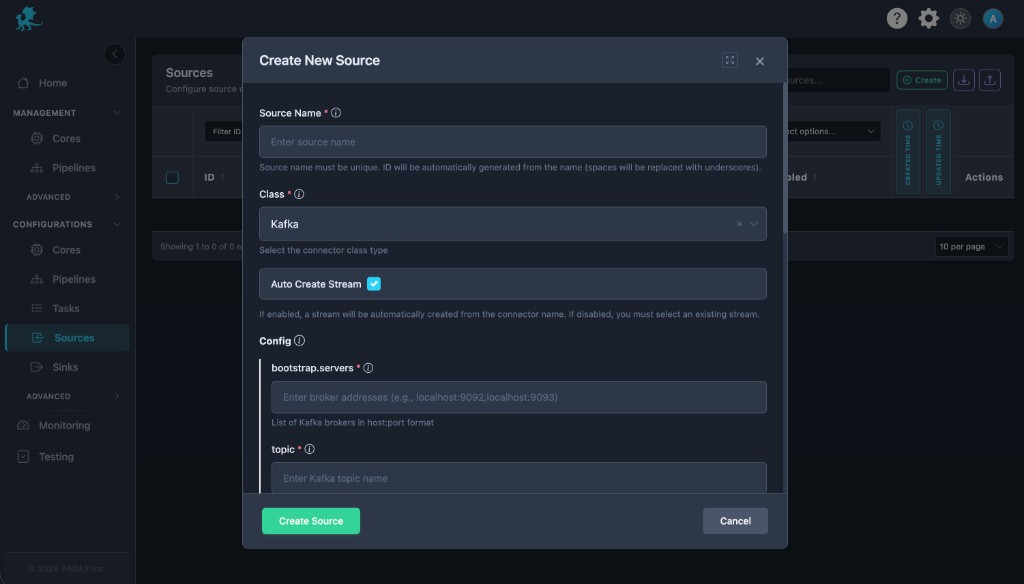

Create connector

Open Sources or Sinks and select Create.

Set Class to Kafka, then set the name, stream behavior, and Enabled.

Enter the bootstrap brokers and topic; for a source, also set the consumer group.

Expand Common configuration for SASL/TLS and consumer or producer tuning.

Save, then wire the stream into tasks or pipelines.

Source (UI)

The Kafka source connector form.

| UI field | Connector setting |
| --- | --- |
| bootstrap.servers (required) | bootstrap_servers |
| topic (required) | topic |

Sink (UI)

The sink form reuses the same broker and topic fields; layout matches the source modal (titles differ).

The Kafka sink connector form (same Config fields).

Configuration

Cluster and topic

bootstrap_servers, topic

Consumer (source)

group_id

Security

authentication, tls

Throughput

buffer, workers, batch

Runtime behavior

Connectors start after Core deployment; Disabled connectors do not read or write Kafka.

Source consumers commit offsets according to Core; sink producers respect acks and retries from config. A dedicated consumer group per PADAS source keeps the source from competing with other applications across restarts.

Use a unique group_id per PADAS Kafka source where possible.

Align sink batch sizes with message.max.bytes.

Monitor lag and rebalance events when scaling workers or changing group_id.
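The advice to use a unique group_id per source can be checked mechanically across a set of connector definitions. The following is a minimal sketch under the assumption that connectors are available as dictionaries with the name, class, type, and config fields described earlier; the function name and sample connectors are hypothetical.

```python
from collections import defaultdict


def shared_group_ids(connectors: list) -> dict:
    """Map each group_id used by more than one Kafka source to the source names."""
    by_group = defaultdict(list)
    for connector in connectors:
        if connector.get("class") == "kafka" and connector.get("type") == "source":
            group_id = connector.get("config", {}).get("group_id")
            if group_id:
                by_group[group_id].append(connector["name"])
    # Keep only groups that more than one source shares.
    return {group: names for group, names in by_group.items() if len(names) > 1}


# Illustrative connector definitions; all values are placeholders.
connectors = [
    {"name": "orders-in", "class": "kafka", "type": "source",
     "config": {"group_id": "padas-shared"}},
    {"name": "payments-in", "class": "kafka", "type": "source",
     "config": {"group_id": "padas-shared"}},
    {"name": "audit-in", "class": "kafka", "type": "source",
     "config": {"group_id": "padas-audit"}},
    {"name": "alerts-out", "class": "kafka", "type": "sink",
     "config": {"topic": "alerts"}},
]

print(shared_group_ids(connectors))
# prints {'padas-shared': ['orders-in', 'payments-in']}
```

Sharing a group is sometimes intentional, for example when several sources should split the partitions of one topic, so a report like this is a prompt for review rather than an error.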
